ORB Feature Description and Matching


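This chapter demonstrates ORB feature description and matching. The C++ and C# versions follow the code flow from Mao Xingyun (毛星云). One thing to note: the demo relies on the knnSearch operation in FLANN. After a lot of searching I still could not find how to do this from Python with OpenCV 4. Everything online is an OpenCV 3 Python snippet, so for now the Python version is left unfinished and I will complete it once I find a solution. If you have a working demo, please leave a link in the comments. Thanks!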

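ORB serves the same purpose as SURF and SIFT: it detects keypoints and computes descriptors for them. There is an article on Bilibili about ORB with Python and OpenCV that I found helpful; it is worth a look: https://www.bilibili.com/read/cv7620346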

All demos in this series can be downloaded from:

https://github.com/GaoRenBao/OpenCv4-Demo

The OpenCV version and development environment used for each language are listed below:

| Language | OpenCV version | IDE |
| --- | --- | --- |
| C# | OpenCvSharp4.4.8.0.20230708 | Visual Studio 2022 |
| C++ | OpenCv-4.5.5-vc14_vc15 | Visual Studio 2022 |
| Python | OpenCv-Python (4.6.0.66) | PyCharm Community Edition 2022.1.3 |

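Because ORB works much like SURF and SIFT (all of them are used for feature matching), we again need a source image to match against. The C++ and C# versions produce the same result, so the C# version is shown here as the example: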

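The official sample code relevant to the C# version is located at the paths below. Not every release of the official samples includes these two files, so I have bundled both of them into the project directory for reference.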

Sample-4.1.0-20190417\SamplesCS\Samples\FREAKSample.cs

Sample-4.1.0-20190417\SamplesCS\Samples\FlannSample.cs

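The results of running the two official samples in the C# version are shown below.

FREAKSample.cs mainly demonstrates ORB keypoint detection (the descriptors are then computed with FREAK). The result looks like this:

The two source images:

Result: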

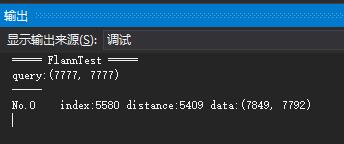

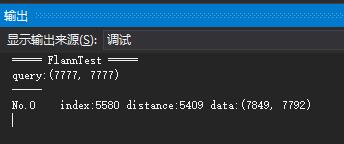

FlannSample.cs mainly demonstrates the KnnSearch operation in FLANN. This sample has no image output; it only prints the search result to the console.
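To make clear what that query computes, here is a minimal Python sketch of an exact (brute-force) nearest-neighbour search over 2D points. It is not the FLANN implementation: FLANN uses a KD-tree to approximate this result quickly, while the sketch simply checks every point. The function name `knn_search`, the tiny data set, and the use of squared Euclidean distance are my own choices for illustration.

```python
def knn_search(points, query, k=1):
    """Return (indices, squared_distances) of the k points closest to query."""
    scored = []
    for i, (x, y) in enumerate(points):
        d2 = (x - query[0]) ** 2 + (y - query[1]) ** 2
        scored.append((d2, i))
    scored.sort()  # smallest squared distance first
    nearest = scored[:k]
    return [i for _, i in nearest], [d for d, _ in nearest]


# Small fixed data set (hypothetical) so the result can be checked by hand.
points = [(100.0, 200.0), (7700.0, 7800.0), (7780.0, 7770.0), (9000.0, 1000.0)]
indices, dists = knn_search(points, (7777.0, 7777.0), k=2)
for rank, (index, dist) in enumerate(zip(indices, dists)):
    print(f"No.{rank}\tindex:{index} distance:{dist} data:{points[index]}")
```

The printed fields mirror the ones written by the C# sample (rank, index, distance, data), so the two can be compared side by side.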

The C# code is as follows (it includes the official samples as demo1 and demo2):

using OpenCvSharp;
using OpenCvSharp.Flann;
using OpenCvSharp.XFeatures2D;
using System.Collections.Generic;
using System;

namespace demo
{
    internal class Program
    {
        static void Main(string[] args)
        {
            demo1();
            //demo2();
            //demo3();
        }

        /// <summary>
        /// FREAKSample
        /// </summary>
        private static void demo1()
        {
            var gray = new Mat("../../../images/book1.jpg", ImreadModes.Grayscale);
            var dst = new Mat("../../../images/book2.jpg", ImreadModes.Color);

            // ORB: detect up to 1000 keypoints
            var orb = ORB.Create(1000);
            KeyPoint[] keypoints = orb.Detect(gray);

            // FREAK: compute descriptors for the ORB keypoints
            FREAK freak = FREAK.Create();
            Mat freakDescriptors = new Mat();
            freak.Compute(gray, ref keypoints, freakDescriptors);

            if (keypoints != null)
            {
                // Mark every keypoint with a circle and a cross
                var color = new Scalar(0, 255, 0);
                foreach (KeyPoint kpt in keypoints)
                {
                    float r = kpt.Size / 2;
                    Cv2.Circle(dst, (Point)kpt.Pt, (int)r, color);
                    Cv2.Line(dst,
                        (Point)new Point2f(kpt.Pt.X + r, kpt.Pt.Y + r),
                        (Point)new Point2f(kpt.Pt.X - r, kpt.Pt.Y - r),
                        color);
                    Cv2.Line(dst,
                        (Point)new Point2f(kpt.Pt.X - r, kpt.Pt.Y + r),
                        (Point)new Point2f(kpt.Pt.X + r, kpt.Pt.Y - r),
                        color);
                }
            }

            // Cv2.ImWrite("FREAKSample.jpg", dst);
            using (new Window("FREAK", dst))
            {
                Cv2.WaitKey();
            }
        }

        /// <summary>
        /// FlannSample
        /// </summary>
        private static void demo2()
        {
            Console.WriteLine("===== FlannTest =====");

            // Create the data set: 10000 random 2D points
            using (var features = new Mat(10000, 2, MatType.CV_32FC1))
            {
                var rand = new Random();
                for (int i = 0; i < features.Rows; i++)
                {
                    features.Set<float>(i, 0, rand.Next(10000));
                    features.Set<float>(i, 1, rand.Next(10000));
                }

                // Query point
                var queryPoint = new Point2f(7777, 7777);
                var queries = new Mat(1, 2, MatType.CV_32FC1);
                queries.Set<float>(0, 0, queryPoint.X);
                queries.Set<float>(0, 1, queryPoint.Y);
                Console.WriteLine("query:({0}, {1})", queryPoint.X, queryPoint.Y);
                Console.WriteLine("-----");

                // knnSearch
                using (var nnIndex = new Index(features, new KDTreeIndexParams(4)))
                {
                    const int Knn = 1;
                    int[] indices;
                    float[] dists;
                    nnIndex.KnnSearch(queries, out indices, out dists, Knn, new SearchParams(32));

                    for (int i = 0; i < Knn; i++)
                    {
                        int index = indices[i];
                        float dist = dists[i];
                        var pt = new Point2f(features.Get<float>(index, 0), features.Get<float>(index, 1));
                        Console.Write("No.{0}\t", i);
                        Console.Write("index:{0}", index);
                        Console.Write(" distance:{0}", dist);
                        Console.Write(" data:({0}, {1})", pt.X, pt.Y);
                        Console.WriteLine();
                    }
                }
            }
            Console.Read();
        }

        /// <summary>
        /// Mao Xingyun demo
        /// </summary>
        private static void demo3()
        {
            // [0] Initialize the video capture object
            VideoCapture Cap = new VideoCapture();
            Cap.Open(0);

            // Check whether the camera opened successfully
            if (!Cap.IsOpened())
            {
                Console.WriteLine("Failed to open the camera.");
                Console.Read();
                return;
            }

            // [1] Grab the source image, display it, and convert it to grayscale
            Mat srcImage = new Mat();
            Cap.Read(srcImage);
            Cv2.ImShow("Source image", srcImage);
            Mat grayImage = new Mat();
            Cv2.CvtColor(srcImage, grayImage, ColorConversionCodes.BGR2GRAY);

            //---------- Detect ORB keypoints and extract the object's descriptors ----------
            // [2] Create the detector
            var featureDetector = ORB.Create();

            // [3] Call Detect to find the keypoints and store them in a KeyPoint array
            KeyPoint[] keyPoints = featureDetector.Detect(grayImage);

            // [4] Compute the descriptors (feature vectors)
            Mat descriptors = new Mat();
            featureDetector.Compute(grayImage, ref keyPoints, descriptors);

            // [5] Build the FLANN index used for descriptor matching (LSH, Hamming distance)
            var flannIndex = new Index(descriptors, new LshIndexParams(12, 20, 2), FlannDistance.Hamming);

            // [6] Loop until the ESC key is pressed
            Mat captureImage = new Mat();
            Mat captureImage_gray = new Mat();
            Mat captureDescription = new Mat();
            while (true)
            {
                if (!Cap.Read(captureImage))
                    continue;

                // Convert the captured frame to grayscale
                Cv2.CvtColor(captureImage, captureImage_gray, ColorConversionCodes.BGR2GRAY);

                // [7] Detect ORB keypoints and extract descriptors from the test image
                // [8] Call Detect to find the keypoints and store them in a KeyPoint array
                KeyPoint[] captureKeyPoints = featureDetector.Detect(captureImage_gray);

                // [9] Compute the descriptors
                featureDetector.Compute(captureImage_gray, ref captureKeyPoints, captureDescription);

                // [10] Match the descriptors and fetch the two nearest neighbours of each one
                Mat matchIndex = new Mat(captureDescription.Rows, 2, MatType.CV_32SC1);
                Mat matchDistance = new Mat(captureDescription.Rows, 2, MatType.CV_32FC1);

                // Run the k-nearest-neighbour search
                flannIndex.KnnSearch(captureDescription, matchIndex, matchDistance, 2, new SearchParams());

                // [11] Keep only the good matches using Lowe's ratio test
                List<DMatch> goodMatches = new List<DMatch>();
                for (int i = 0; i < matchDistance.Rows; i++)
                {
                    if (matchDistance.Get<float>(i, 0) < 0.6 * matchDistance.Get<float>(i, 1))
                    {
                        DMatch dmatches = new DMatch(i, matchIndex.Get<int>(i, 0), matchDistance.Get<float>(i, 0));
                        goodMatches.Add(dmatches);
                    }
                }

                // [12] Draw and display the matches
                Mat resultImage = new Mat();
                Cv2.DrawMatches(captureImage, captureKeyPoints, srcImage, keyPoints, goodMatches, resultImage);

                // Show the result at half size
                Cv2.Resize(resultImage, resultImage, new Size(resultImage.Cols * 0.5, resultImage.Rows * 0.5), 0, 0, InterpolationFlags.Area);
                Cv2.ImShow("Matches", resultImage);

                // Exit when the ESC key is pressed
                if ((char)Cv2.WaitKey(1) == 27)
                    break;
            }
        }
    }
}
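Steps [10] and [11] of demo3 are the heart of the matching, so here is a minimal Python sketch of just that filter. It assumes the search has already produced two row-aligned tables like matchIndex and matchDistance (two nearest neighbours per query descriptor). The function name `ratio_test`, the tuple layout, and the sample numbers are hypothetical; only the 0.6 threshold comes from the code above.

```python
def ratio_test(match_index, match_distance, ratio=0.6):
    """Keep query rows whose best distance is clearly below the second best.

    Returns a list of (query_idx, train_idx, distance) tuples, the same three
    values that the C# code passes to the DMatch constructor.
    """
    good = []
    for query_idx, (idx_row, dist_row) in enumerate(zip(match_index, match_distance)):
        best, second = dist_row[0], dist_row[1]
        if best < ratio * second:
            good.append((query_idx, idx_row[0], best))
    return good


# Hypothetical search output for three query descriptors.
match_index = [[4, 9], [7, 2], [1, 3]]
match_distance = [[10.0, 40.0], [30.0, 35.0], [5.0, 9.0]]
good_matches = ratio_test(match_index, match_distance)
print(good_matches)
```

The second row is rejected because its two candidates are almost equally close (30 versus 35), which is exactly the ambiguous case the ratio test is meant to discard.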

The C++ code is as follows:

#include <opencv2/opencv.hpp>
#include <opencv2/highgui/highgui.hpp>
#include <opencv2/features2d.hpp>
#include <iostream>
#include <vector>

using namespace cv;
using namespace std;

int main()
{
    // [0] Initialize the video capture object
    VideoCapture cap(0);

    // Check whether the camera opened successfully
    if (!cap.isOpened())
    {
        cout << "Failed to open the camera" << endl;
        return 0;
    }

    Mat srcImage;
    cap >> srcImage;

    // [1] Grab the source image, display it, and convert it to grayscale
    //Mat srcImage = imread("1.jpg");
    imshow("Source image", srcImage);
    Mat grayImage;
    cvtColor(srcImage, grayImage, COLOR_BGR2GRAY);

    //---------- Detect ORB keypoints and extract the object's descriptors ----------
    // [2] Create the detector and the containers
    Ptr<ORB> featureDetector = ORB::create();
    vector<KeyPoint> keyPoints;
    Mat descriptors;

    // [3] Call detect to find the keypoints and store them in the vector
    featureDetector->detect(grayImage, keyPoints);

    // [4] Compute the descriptors (feature vectors)
    featureDetector->compute(grayImage, keyPoints, descriptors);

    // [5] Build the FLANN index used for descriptor matching (LSH, Hamming distance)
    flann::Index flannIndex(descriptors, flann::LshIndexParams(12, 20, 2), cvflann::FLANN_DIST_HAMMING);

    // [6] Loop until the ESC key is pressed
    while (1)
    {
        double time0 = static_cast<double>(getTickCount()); // record the start time
        Mat captureImage, captureImage_gray;                // frame buffers for video capture
        cap >> captureImage;                                // grab a frame
        if (captureImage.empty())                           // skip empty frames
            continue;

        // Used to save the source image
        //imshow("1.jpg", captureImage);
        //imwrite("1.jpg", captureImage);
        //waitKey(0);
        //continue;

        // Convert the captured frame to grayscale
        cvtColor(captureImage, captureImage_gray, COLOR_BGR2GRAY);

        // [7] Detect ORB keypoints and extract descriptors from the test image
        vector<KeyPoint> captureKeyPoints;
        Mat captureDescription;

        // [8] Call detect to find the keypoints and store them in the vector
        featureDetector->detect(captureImage_gray, captureKeyPoints);

        // [9] Compute the descriptors
        featureDetector->compute(captureImage_gray, captureKeyPoints, captureDescription);

        // [10] Match the descriptors and fetch the two nearest neighbours of each one
        Mat matchIndex(captureDescription.rows, 2, CV_32SC1), matchDistance(captureDescription.rows, 2, CV_32FC1);
        flannIndex.knnSearch(captureDescription, matchIndex, matchDistance, 2, flann::SearchParams()); // k-nearest-neighbour search

        // [11] Keep only the good matches using Lowe's ratio test
        vector<DMatch> goodMatches;
        for (int i = 0; i < matchDistance.rows; i++)
        {
            if (matchDistance.at<float>(i, 0) < 0.6 * matchDistance.at<float>(i, 1))
            {
                DMatch dmatches(i, matchIndex.at<int>(i, 0), matchDistance.at<float>(i, 0));
                goodMatches.push_back(dmatches);
            }
        }

        // [12] Draw and display the matches
        Mat resultImage;
        drawMatches(captureImage, captureKeyPoints, srcImage, keyPoints, goodMatches, resultImage);
        imshow("Matches", resultImage);

        // [13] Print the frame rate
        cout << ">FPS= " << getTickFrequency() / (getTickCount() - time0) << endl;

        // Exit when the ESC key is pressed
        if (char(waitKey(1)) == 27) break;
    }
    return 0;
}
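Both the C# and the C++ version build the index with LshIndexParams(12, 20, 2), which in OpenCV are the table count, the key size in bits, and the multi-probe level. The following Python sketch shows the general idea behind locality-sensitive hashing for binary descriptors (bit sampling). It is a simplified illustration, not FLANN's implementation: it ignores multi-probing, stores each descriptor as one 256-bit integer, and every function name in it is hypothetical.

```python
import random

DESCRIPTOR_BITS = 256  # an ORB descriptor is 32 bytes


def make_key(desc, positions):
    """Pack the selected bits of desc into one integer bucket key."""
    key = 0
    for pos in positions:
        key = (key << 1) | ((desc >> pos) & 1)
    return key


def build_tables(descriptors, table_number=12, key_size=20, seed=0):
    """Build table_number hash tables, each keyed on key_size sampled bit positions."""
    rng = random.Random(seed)
    tables = []
    for _ in range(table_number):
        positions = rng.sample(range(DESCRIPTOR_BITS), key_size)
        buckets = {}
        for index, desc in enumerate(descriptors):
            buckets.setdefault(make_key(desc, positions), []).append(index)
        tables.append((positions, buckets))
    return tables


def query(tables, descriptors, desc, k=2):
    """Collect candidates from the matching buckets, then rank them by Hamming distance."""
    candidates = set()
    for positions, buckets in tables:
        candidates.update(buckets.get(make_key(desc, positions), []))
    ranked = sorted((bin(descriptors[i] ^ desc).count("1"), i) for i in candidates)
    return ranked[:k]  # list of (hamming_distance, index)


# Random stand-in descriptors; real ones would come from ORB.
rng = random.Random(42)
train = [rng.getrandbits(DESCRIPTOR_BITS) for _ in range(500)]
tables = build_tables(train)
noisy = train[123] ^ 0b111  # descriptor 123 with three bits flipped
print(query(tables, train, train[123]))
print(query(tables, train, noisy))
```

Only descriptors that share a bucket with the query in at least one table are compared, which is why the search is fast but approximate: a query can come back with fewer than k neighbours, or none at all.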

The Python code is as follows (it does not run; unresolved):

import cv2
import numpy as np

# Load the source image, display it, and convert it to grayscale
srcImage1 = cv2.imread("1.jpg")
cv2.imshow("srcImage1", srcImage1)
srcImage1_gray = cv2.cvtColor(srcImage1, cv2.COLOR_BGR2GRAY)

# ---------- Detect ORB keypoints and extract the object's descriptors ----------
# Create the detector
orb = cv2.ORB_create()

# Compute the descriptors (feature vectors)
(kp1, des1) = orb.detectAndCompute(srcImage1_gray, None)
des1 = np.array(des1, np.float32)
flannIndex = cv2.flann_Index(des1, dict(algorithm=0, trees=5))

Cap = cv2.VideoCapture(0)

# Check whether the camera opened
if not Cap.isOpened():
    print('Open Camera Error.')
else:
    while True:
        grabbed, srcImage2 = Cap.read()
        if srcImage2 is None:
            continue

        # Convert the frame to grayscale
        srcImage2_gray = cv2.cvtColor(srcImage2, cv2.COLOR_BGR2GRAY)

        # Compute the descriptors (feature vectors)
        (kp2, des2) = orb.detectAndCompute(srcImage2_gray, None)
        des2 = np.array(des2, np.float32)

        # Run the k-nearest-neighbour search
        idx2, matchDistance = flannIndex.knnSearch(des2, 2, params = {})

        # Discard matches above the 0.6 ratio
        # NOTE: knnSearch returns plain index and distance arrays, not DMatch
        # objects, so m and n below are floats and drawMatches rejects the list.
        goodMatches = []
        for m,n in matchDistance:
            #if m.distance < 0.7 * n.distance:
            goodMatches.append(m)

        # Draw the matches
        dstImage = cv2.drawMatches(srcImage2, kp2, srcImage1, kp1, goodMatches, None)
        cv2.imshow("dstImage", dstImage)
        cv2.waitKey(1)  # delay between frames

cv2.waitKey(0)
cv2.destroyAllWindows()
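Until the FLANN route is sorted out in Python, the search itself can be sketched without FLANN. The snippet below is a pure-Python, exact (brute-force) 2-nearest-neighbour search under Hamming distance, returning index and distance rows laid out like matchIndex and matchDistance in the C++ code. It is only a stand-in to show the data flow, not a fix for the script above: the function names are hypothetical and the 2-byte descriptors are toy data. It should also accept rows of the uint8 array returned by detectAndCompute, although I have not tested that, and it will be far too slow for live video. OpenCV's Python bindings also provide an exact matcher, cv2.BFMatcher(cv2.NORM_HAMMING) with knnMatch, which may be a simpler route; I have not verified it against the versions listed in the table above.

```python
def hamming(a, b):
    """Number of differing bits between two equal-length byte sequences."""
    return sum(bin(x ^ y).count("1") for x, y in zip(a, b))


def knn_search(train, queries, k=2):
    """Exact k-nearest-neighbour search; returns (indices, dists), one row per query."""
    indices, dists = [], []
    for q in queries:
        ranked = sorted((hamming(q, t), i) for i, t in enumerate(train))[:k]
        indices.append([i for _, i in ranked])
        dists.append([d for d, _ in ranked])
    return indices, dists


# Toy 2-byte descriptors standing in for 32-byte ORB rows.
train = [bytes([0b00000000, 0b00000000]),
         bytes([0b11111111, 0b00000000]),
         bytes([0b11110000, 0b00001111])]
queries = [bytes([0b11111110, 0b00000000])]
indices, dists = knn_search(train, queries)
print(indices, dists)
```

Each row of `indices` and `dists` can then be filtered with the same 0.6 ratio check used in step [11], and the surviving (query index, train index, distance) triples are what a DMatch needs.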